WaveRNN is a vocoder that can convert a mel-spectrogram into a WAV file. In this tutorial, we will show you how to do it.

WaveRNN

WaveRNN is built on a GRU (gated recurrent unit). A TensorFlow version can be found here. It generates a waveform from an audio mel-spectrogram.
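For reference, one common formulation of a single GRU step is given below. Here x_t is the input at step t, h_{t-1} is the previous hidden state, σ is the logistic sigmoid and ⊙ is element-wise multiplication. In a vocoder, the input typically combines the previous audio sample with mel-spectrogram conditioning features. Frameworks differ slightly (for example in where the reset gate is applied and which side z_t weights), so treat this as the generic cell, not necessarily the exact one in the TensorFlow version.

```latex
\begin{aligned}
z_t &= \sigma(W_z x_t + U_z h_{t-1} + b_z) \\
r_t &= \sigma(W_r x_t + U_r h_{t-1} + b_r) \\
\tilde{h}_t &= \tanh\big(W_h x_t + U_h (r_t \odot h_{t-1}) + b_h\big) \\
h_t &= (1 - z_t) \odot h_{t-1} + z_t \odot \tilde{h}_t
\end{aligned}
```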

How to convert a mel-spectrogram to WAV audio using WaveRNN?

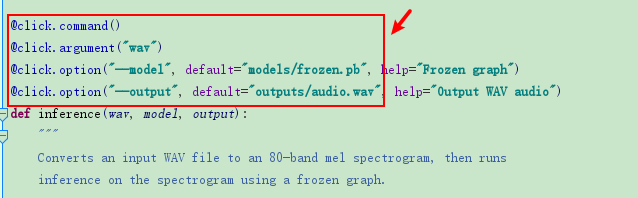

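Open run_wavernn.py and remove all @click decorators. They turn inference() into a command-line entry point; without them, we can call it directly as a regular Python function.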

In this file, the main function is inference(). It performs the following steps:

First, it reads the audio data using librosa.load().

Understand librosa.load() is Between -1.0 and 1.0 – Librosa Tutorial
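Why are the samples between -1.0 and 1.0? WAV files commonly store 16-bit integer PCM samples, and decoding them to floating point divides by 32768 (2^15). A minimal NumPy sketch of that scaling, assuming 16-bit PCM input; pcm16_to_float() is a hypothetical helper, and librosa.load() additionally resamples to 22050 Hz and mixes down to mono by default, which this sketch does not do:

```python
import numpy as np

def pcm16_to_float(samples: np.ndarray) -> np.ndarray:
    """Scale 16-bit integer PCM samples into the range [-1.0, 1.0)."""
    if samples.dtype != np.int16:
        raise TypeError("expected int16 PCM samples")
    # int16 spans [-32768, 32767]; dividing by 2**15 maps it to [-1.0, 1.0)
    return samples.astype(np.float32) / 32768.0

pcm = np.array([-32768, -16384, 0, 16384, 32767], dtype=np.int16)
audio = pcm16_to_float(pcm)
print(audio.min(), audio.max())  # min is exactly -1.0, max stays just below 1.0
```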

Next, it computes the mel-spectrogram with the compute_spectrogram() function. You can also use librosa.feature.melspectrogram() instead, as described in:

Compute and Display Audio Mel-spectrogram in Python – Python Tutorial
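To show what such a function computes, here is a NumPy-only sketch of a power mel-spectrogram: frame the signal, apply a Hann window, take the FFT power and project it onto triangular mel filters. It assumes the HTK mel formula m = 2595 * log10(1 + f / 700) with unnormalized filters, while librosa.feature.melspectrogram() defaults to the Slaney mel scale with area-normalized filters, so values will not match librosa exactly. We also do not know the parameters compute_spectrogram() uses; n_fft=1024, hop_length=256 and n_mels=80 are illustrative assumptions.

```python
import numpy as np

def hz_to_mel(f):
    # HTK-style mel scale
    return 2595.0 * np.log10(1.0 + np.asarray(f) / 700.0)

def mel_to_hz(m):
    return 700.0 * (10.0 ** (np.asarray(m) / 2595.0) - 1.0)

def mel_filterbank(sr, n_fft, n_mels):
    """Triangular filters of shape (n_mels, n_fft // 2 + 1)."""
    fft_freqs = np.linspace(0.0, sr / 2.0, n_fft // 2 + 1)
    # n_mels + 2 points evenly spaced on the mel scale: left edge, peak, right edge per filter
    hz_points = mel_to_hz(np.linspace(hz_to_mel(0.0), hz_to_mel(sr / 2.0), n_mels + 2))
    fb = np.zeros((n_mels, len(fft_freqs)))
    for i in range(n_mels):
        left, center, right = hz_points[i], hz_points[i + 1], hz_points[i + 2]
        rising = (fft_freqs - left) / (center - left)
        falling = (right - fft_freqs) / (right - center)
        fb[i] = np.maximum(0.0, np.minimum(rising, falling))
    return fb

def mel_spectrogram(y, sr, n_fft=1024, hop_length=256, n_mels=80):
    """Power mel-spectrogram of shape (n_mels, n_frames)."""
    y = np.pad(y, n_fft // 2)  # center frames by zero-padding half a window per side
    n_frames = 1 + (len(y) - n_fft) // hop_length
    window = np.hanning(n_fft)
    frames = np.stack([y[t * hop_length : t * hop_length + n_fft] * window
                       for t in range(n_frames)])
    power = np.abs(np.fft.rfft(frames, axis=1)) ** 2    # (n_frames, n_fft // 2 + 1)
    return mel_filterbank(sr, n_fft, n_mels) @ power.T  # (n_mels, n_frames)

sr = 22050
t = np.arange(sr) / sr                   # one second of audio
y = 0.5 * np.sin(2 * np.pi * 440.0 * t)  # 440 Hz test tone
mel = mel_spectrogram(y, sr)
print(mel.shape)  # (80, 87)
```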

Then, it calls run_wavernn() to generate a waveform from the mel-spectrogram.
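Conceptually, WaveRNN generates the waveform one sample at a time. Each mel frame is upsampled to the audio rate (here by simple repetition), and at every step a GRU consumes the previous sample together with the current conditioning frame. The sketch below shows only that control flow. It uses small random, untrained weights and a tanh output, whereas real WaveRNN models typically sample from a categorical (softmax) or mixture distribution, so it produces noise, not speech. It is not the run_wavernn() implementation; gru_step(), toy_vocoder() and all sizes are hypothetical.

```python
import numpy as np

def sigmoid(x):
    return 1.0 / (1.0 + np.exp(-x))

def gru_step(x, h, W, U, b):
    """One GRU step; W, U, b hold update (z), reset (r) and candidate (n) parameters."""
    z = sigmoid(W["z"] @ x + U["z"] @ h + b["z"])
    r = sigmoid(W["r"] @ x + U["r"] @ h + b["r"])
    n = np.tanh(W["n"] @ x + U["n"] @ (r * h) + b["n"])
    return (1.0 - z) * h + z * n

def toy_vocoder(mel, hop_length=256, hidden=32, seed=0):
    """Hypothetical autoregressive loop: mel (n_mels, n_frames) -> waveform samples."""
    rng = np.random.default_rng(seed)
    n_mels, n_frames = mel.shape
    in_dim = n_mels + 1  # conditioning frame + previous sample
    W = {k: rng.normal(0, 0.1, (hidden, in_dim)) for k in "zrn"}
    U = {k: rng.normal(0, 0.1, (hidden, hidden)) for k in "zrn"}
    b = {k: np.zeros(hidden) for k in "zrn"}
    w_out = rng.normal(0, 0.1, hidden)

    h = np.zeros(hidden)
    prev = 0.0
    out = np.empty(n_frames * hop_length)
    for t in range(n_frames * hop_length):
        frame = mel[:, t // hop_length]  # repeat each frame hop_length times
        x = np.concatenate([frame, [prev]])
        h = gru_step(x, h, W, U, b)
        prev = np.tanh(w_out @ h)        # a real model would sample from a distribution here
        out[t] = prev
    return out

mel = np.abs(np.random.default_rng(1).normal(size=(80, 10)))
wav = toy_vocoder(mel, hop_length=64)
print(wav.shape)  # (640,)
```

The sequential loop is why sample-by-sample vocoders are slow at inference time: every output sample depends on the one before it.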

Finally, it uses librosa.output.write_wav() to save the wave file.

However, you may encounter the error AttributeError: module 'librosa' has no attribute 'output', because the librosa.output module was removed in librosa 0.8.0. You can find the solution here:

Fix AttributeError: module ‘librosa’ has no attribute ‘output’ – Librosa Tutorial
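A common replacement for librosa.output.write_wav() is soundfile.write(path, y, sr). If you would rather avoid an extra dependency, the sketch below writes mono float audio as 16-bit PCM with Python's built-in wave module. write_wav_pcm16() and the file name are hypothetical, and clipping to [-1.0, 1.0] before conversion is our own choice.

```python
import wave
import numpy as np

def write_wav_pcm16(path, y, sr):
    """Write a mono float waveform in [-1.0, 1.0] to a 16-bit PCM WAV file."""
    y = np.clip(np.asarray(y, dtype=np.float64), -1.0, 1.0)
    pcm = (y * 32767.0).astype("<i2")  # little-endian int16, as WAV expects
    with wave.open(path, "wb") as f:
        f.setnchannels(1)              # mono
        f.setsampwidth(2)              # 2 bytes = 16 bits per sample
        f.setframerate(sr)
        f.writeframes(pcm.tobytes())

sr = 22050
t = np.arange(sr) / sr
y = 0.3 * np.sin(2 * np.pi * 220.0 * t)
write_wav_pcm16("example_pcm16.wav", y, sr)
```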

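We can call the inference() function as follows:

```python
# Run from run_wavernn.py, where inference() is defined
if __name__ == '__main__':
    wav = r'samples/1221306.wav'  # input audio used to compute the mel-spectrogram
    model = r'models/frozen.pb'   # frozen TensorFlow WaveRNN model
    output = 'wavernn_1.wav'      # path of the generated wave file
    inference(wav, model, output)
```

Run this code and a new wave file will be created. However, the generated audio may sound worse than the original, because the WaveRNN model should be fine-tuned on your own dataset.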

We can also create a new waveform from a mel-spectrogram using the Griffin-Lim algorithm. Here is the tutorial:

Convert Mel-spectrogram to WAV Audio Using Griffin-Lim in Python – Python Tutorial
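For intuition, Griffin-Lim recovers a waveform from a magnitude spectrogram alone by alternating two steps: combine the known magnitude with the current phase estimate and invert it (ISTFT), then take the phase of the STFT of that signal. The NumPy sketch below works on a linear-frequency magnitude spectrogram. Starting from a mel-spectrogram first needs an approximate mel-to-linear inversion, which librosa bundles in librosa.feature.inverse.mel_to_audio(). The function names, the random phase initialization and the parameters are our own assumptions, not the linked tutorial's code.

```python
import numpy as np

def _window(n_fft):
    # Periodic Hann window
    return 0.5 - 0.5 * np.cos(2 * np.pi * np.arange(n_fft) / n_fft)

def stft(y, n_fft=512, hop=128):
    """Centered STFT, shape (n_fft // 2 + 1, n_frames)."""
    y = np.pad(y, n_fft // 2)
    n_frames = 1 + (len(y) - n_fft) // hop
    frames = np.stack([y[t * hop : t * hop + n_fft] for t in range(n_frames)])
    return np.fft.rfft(frames * _window(n_fft), axis=1).T

def istft(X, length, n_fft=512, hop=128):
    """Least-squares inverse of stft(): weighted overlap-add, trimmed to `length`."""
    win = _window(n_fft)
    frames = np.fft.irfft(X.T, n=n_fft, axis=1) * win
    n_frames = frames.shape[0]
    out = np.zeros(n_fft + hop * (n_frames - 1))
    norm = np.zeros_like(out)
    for t in range(n_frames):
        out[t * hop : t * hop + n_fft] += frames[t]
        norm[t * hop : t * hop + n_fft] += win ** 2
    nonzero = norm > 1e-8
    out[nonzero] /= norm[nonzero]
    return out[n_fft // 2 : n_fft // 2 + length]

def griffin_lim(mag, length, n_iter=50, n_fft=512, hop=128, seed=0):
    """Estimate a waveform whose STFT magnitude approximates `mag`."""
    rng = np.random.default_rng(seed)
    phase = np.exp(2j * np.pi * rng.random(mag.shape))      # random initial phase
    for _ in range(n_iter):
        y = istft(mag * phase, length, n_fft, hop)          # impose magnitude, invert
        phase = np.exp(1j * np.angle(stft(y, n_fft, hop)))  # keep only the new phase
    return istft(mag * phase, length, n_fft, hop)

def spectral_convergence(mag, y, n_fft=512, hop=128):
    """Relative magnitude error; 0 means a perfect match."""
    return np.linalg.norm(mag - np.abs(stft(y, n_fft, hop))) / np.linalg.norm(mag)

sr = 8000
y = np.sin(2 * np.pi * 440.0 * np.arange(sr) / sr)
mag = np.abs(stft(y))
y_hat = griffin_lim(mag, len(y))
print(spectral_convergence(mag, y_hat))
```

More iterations usually lower the error further, at the cost of speed; the recovered phase is only an estimate, which is why Griffin-Lim output often sounds less natural than a trained vocoder such as WaveRNN.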